Aim for watercooler conversations: improve the ability to identify and understand errors of judgment and choice, in others and eventually in ourselves, by providing a richer and more precise language to discuss them.

Social scientists in the 1970s broadly accepted two ideas about human nature. First, people are generally rational, and their thinking is normally sound. Second, emotions such as fear, affection, and hatred explain most of the occasions on which people depart from rationality.

(a reference to a paper) “Prospect Theory: An Analysis of Decision under Risk”, a theory of choice that is by some counts more influential than our work on judgment, and is one of the foundations of behavioral economics.

Where we are now: the spontaneous search for an intuitive solution sometimes fails – neither an expert solution nor a heuristic answer comes to mind. In such cases we often find ourselves switching to a slower, more deliberate and effortful form of thinking. This is the slow thinking of the title. Fast thinking includes both variants of intuitive thought – the expert and the heuristic – as well as the entirely automatic mental activities of perception and memory, the operations that enable you to know there is a lamp on your desk.

What comes next: why is it so difficult for us to think statistically? We easily think associatively, we think metaphorically, we think causally, but statistics requires thinking about many things at once, which is something that System 1 is not designed to do. We are prone to overestimate how much we understand about the world and to underestimate the role of chance in events.

Part 1: Two Systems

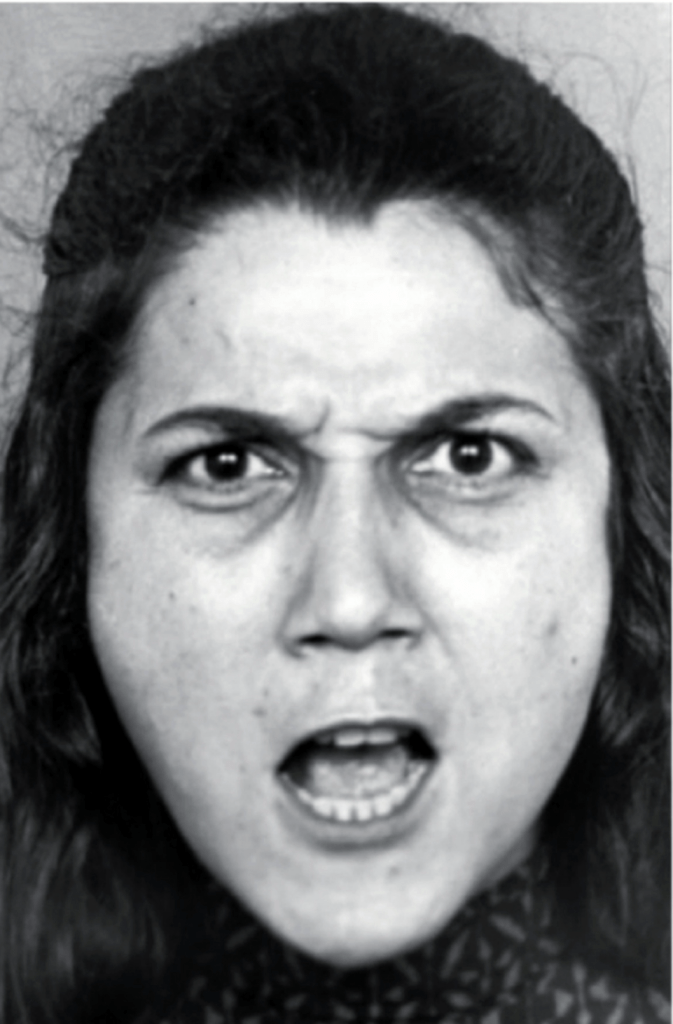

To observe your mind in automatic mode, glance at the image above:

You did not intend to assess her mood or to anticipate what she might do, and your reaction to the picture did not have the feel of something you did. It just happened to you. It was an instance of fast thinking.

Now look at the following problem:

17 x 24

You experienced slow thinking as you proceeded through a sequence of steps. You first retrieved from memory the cognitive program for multiplication that you learned in school, then you implemented it.
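One possible sequence of steps, written out as a worked equation. The particular decomposition (splitting 24 into 20 + 4) is only an illustration; other routes reach the same product.

```latex
17 \times 24 = 17 \times (20 + 4) = 340 + 68 = 408
```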

Psychologists have been intensely interested for several decades in the two modes of thinking evoked by the picture of the angry woman and by the multiplication problem, and have offered many labels for them.

- System 1 operates automatically and quickly, with little or no effort and no sense of voluntary control.

- System 2 allocates attention to the effortful mental activities that demand it, including complex computations. The operations of System 2 are often associated with the subjective experience of agency, choice, and concentration.

System 1 runs automatically and System 2 is normally in a comfortable low-effort mode, in which only a fraction of its capacity is engaged. System 1 continuously generates suggestions for System 2: impressions, intuitions, intentions, and feelings. If endorsed by System 2, impressions and intuitions turn into beliefs, and impulses turn into voluntary actions.

A general “law of least effort” applies to cognitive as well as physical exertion. The law asserts that if there are several ways of achieving the same goal, people will eventually gravitate to the least demanding course of action. In the economy of action, effort is a cost, and the acquisition of skill is driven by the balance of benefits and costs. Laziness is built deep into our nature.

Imagine that you are asked to retain a list of seven digits for a minute or two. You are told that remembering the digits is your top priority. While your attention is focused on the digits, you are offered a choice between two desserts: a sinful chocolate cake and a virtuous fruit salad. The evidence suggests that you would be more likely to select the tempting chocolate cake when your mind is loaded with digits. System 1 has more influence on behavior when System 2 is busy, and it has a sweet tooth.

Too much concern about how well one is doing in a task sometimes disrupts performance by loading short-term memory with pointless anxious thoughts. The conclusion is straightforward: self-control requires attention and effort. Another way of saying this is that controlling thoughts and behaviors is one of the tasks that System 2 performs.

The Lazy System 2:

One of the main functions of System 2 is to monitor and control thoughts and actions “suggested” by System 1, allowing some to be expressed directly in behavior and suppressing or modifying others.

For an example, here is a simple puzzle. Do not try to solve it but listen to your intuition:

A bat and ball cost $1.10.

The bat costs one dollar more than the ball.

How much does the ball cost?

A number came to your mind. The number, of course, is 10: 10 cents. The distinctive mark of this easy puzzle is that it evokes an answer that is intuitive, appealing, and wrong. Do the math, and you will see. If the ball costs 10 cents, then the total cost will be $1.20 (10 cents for the ball and $1.10 for the bat), not $1.10. The correct answer is 5 cents. It is safe to assume that the intuitive answer also came to the mind of those who ended up with the correct number – they somehow managed to resist the intuition.
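The arithmetic behind the puzzle can be checked in a few lines. This is a minimal sketch: the function names are invented for illustration, and prices are kept in whole cents to avoid floating-point rounding.

```python
# Work in whole cents to avoid floating-point rounding.
TOTAL_CENTS = 110       # bat + ball = $1.10
DIFFERENCE_CENTS = 100  # bat - ball = $1.00


def solve_ball_price(total: int, difference: int) -> int:
    """Solve ball + (ball + difference) = total for the ball price."""
    return (total - difference) // 2


def total_if_ball_costs(ball: int, difference: int) -> int:
    """Total cost implied by a candidate ball price."""
    bat = ball + difference
    return ball + bat


ball = solve_ball_price(TOTAL_CENTS, DIFFERENCE_CENTS)
print(ball)                                         # 5
print(total_if_ball_costs(10, DIFFERENCE_CENTS))    # 120, the intuitive answer overshoots
print(total_if_ball_costs(ball, DIFFERENCE_CENTS))  # 110
```

The intuitive answer fails the second constraint: a 10-cent ball forces a $1.10 bat, so the pair costs $1.20.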

The associative machine: in the current view of how associative memory works, a great deal happens at once. An idea that has been activated does not merely evoke one other idea. It activates many ideas, which in turn activate others. Furthermore, only a few of the activated ideas will register in consciousness; most of the work of associative thinking is silent, hidden from our conscious selves. The notion that we have limited access to the workings of our minds is difficult to accept because, naturally, it is alien to our experience, but it is true: you know far less about yourself than you feel you do.

Cognitive ease: is anything new going on? Is there a threat? Are things going well? Should my attention be redirected? Is more effort needed for this task? You can think of a cockpit, with a set of dials that indicate the current values of each of these essential variables. The assessments are carried out automatically by System 1, and one of their functions is to determine whether extra effort is required from System 2.

When you are in a state of cognitive ease, you are probably in a good mood, like what you see, believe what you hear, trust your intuitions, and feel that the current situation is comfortably familiar. You are also likely to be relatively casual and superficial in your thinking. When you feel strained, you are more likely to be vigilant and suspicious, invest more effort in what you are doing, feel less comfortable, and make fewer errors, but you also are less intuitive and less creative than usual.

The sense of ease or strain has multiple causes, and it is difficult to tease them apart. Difficult, but not impossible. People can overcome some of the superficial factors that produce illusions of truth when strongly motivated to do so. On most occasions, however, the lazy System 2 will adopt the suggestions of System 1 and march on.

Strain and effort: cognitive strain, whatever its source, mobilizes System 2, which is more likely to reject the intuitive answer suggested by System 1.

Evidence suggests that good mood, intuition, creativity, gullibility, and increased reliance on System 1 form a cluster. At the other pole, sadness, vigilance, suspicion, an analytic approach, and increased effort also go together. A happy mood loosens the control of System 2 over performance: when in a good mood, people become more intuitive and more creative but also less vigilant and more prone to logical errors. Here again, as in the mere exposure effect, the connection makes biological sense. A good mood is a signal that things are generally going well, the environment is safe, and it is all right to let one’s guard down. A bad mood indicates that things are not going very well, there may be a threat, and vigilance is required.

We next go into more detail on the wonders and limitations of what System 1 can do.

Assessing normality: the main function of System 1 is to maintain and update a model of your personal world, which represents what is normal in it. The model is constructed by associations that link ideas of circumstances, events, actions, and outcomes that co-occur with some regularity, either at the same time or within a relatively short interval.

The prominence of causal intuitions is a recurrent theme in this book because people are prone to apply causal thinking inappropriately, to situations that require statistical reasoning. Statistical thinking derives conclusions about individual cases from properties of categories and ensembles. Unfortunately, System 1 does not have the capacity for this mode of reasoning; System 2 can learn to think statistically, but few people receive the necessary training.

System 1 does not keep track of alternatives that it rejects, or even of the fact that there were alternatives. Conscious doubt is not in the repertoire of System 1; it requires maintaining incompatible interpretations in mind at the same time, which demands mental effort. Uncertainty and doubt are the domain of System 2.

The moral is significant: when System 2 is otherwise engaged, we will believe almost anything. System 1 is gullible and biased to believe, System 2 is in charge of doubting and unbelieving, but System 2 is sometimes busy, and often lazy. Indeed, there is evidence that people are more likely to be influenced by empty persuasive messages, such as commercials, when they are tired and depleted.

The standard practice of open discussion gives too much weight to the opinions of those who speak early and assertively, causing others to line up behind them.

Jumping to conclusions on the basis of limited evidence is so important to an understanding of intuitive thinking, and comes up so often in this book, that we will use a cumbersome abbreviation for it: WYSIATI, which stands for what you see is all there is. System 1 is radically insensitive to both the quality and the quantity of the information that gives rise to impressions and intuitions.

System 2 receives questions or generates them: in either case it directs attention and searches memory to find the answers. System 1 operates differently. It continuously monitors what is going on outside and inside the mind, and continuously generates assessments of various aspects of the situation without specific intention and with little or no effort.

The dominance of conclusions over arguments is most pronounced where emotions are involved. The psychologist Paul Slovic has proposed an affect heuristic in which people let their likes and dislikes determine their beliefs about the world. Your political preference determines the arguments that you find compelling.

Characteristics of System 1

- generates impressions, feelings, and inclinations; when endorsed by System 2 these become beliefs, attitudes, and intentions

- operates automatically and quickly, with little or no effort, and no sense of voluntary control

- can be programmed by System 2 to mobilize attention when a particular pattern is detected (search)

- executes skilled responses and generates skilled intuitions, after adequate training

- creates a coherent pattern of activated ideas in associative memory

- links a sense of cognitive ease to illusions of truth, pleasant feelings, and reduced vigilance

- distinguishes the surprising from the normal

- infers and invents causes and intentions

- neglects ambiguity and suppresses doubt

- is biased to believe and confirm

- exaggerates emotional consistency (halo effect)

- focuses on existing evidence and ignores absent evidence (WYSIATI)

- generates a limited set of basic assessments

- represents sets by norms and prototypes, does not integrate

- matches intensities across scales (e.g. size to loudness)

- computes more than intended (mental shotgun)

- sometimes substitutes an easier question for a difficult one (heuristics)

- is more sensitive to changes than to states (prospect theory)

- overweights low probabilities

- shows diminishing sensitivity to quantity (psychophysics)

- responds more strongly to losses than to gains (loss aversion)

- frames decision problems narrowly, in isolation from one another

Part 2: Heuristics and Biases

Anchoring: the phenomenon we are studying is so common and so important in the everyday world that you should know its name: it is an anchoring effect. It occurs when people consider a particular value for an unknown quantity before estimating that quantity.

Two different mechanisms produce anchoring effects – one for each system. There is a form of anchoring that occurs in a deliberate process of adjustment, an operation of System 2. And there is anchoring that occurs by a priming effect, an automatic manifestation of System 1.

We see the same strategy at work in the negotiation over the price of a home, when the seller makes the first move by setting the list price. As in many other games, moving first is an advantage in single-issue negotiations – for example, when price is the only issue to be settled between a buyer and a seller. As you may have experienced when negotiating for the first time in a bazaar, the initial anchor has a powerful effect. My advice to students when I taught negotiations was that if you think the other side has made an outrageous proposal, you should not come back with an equally outrageous counteroffer, creating a gap that will be difficult to bridge in further negotiations. Instead you should make a scene, storm out or threaten to do so, and make it clear – to yourself as well as to the other side – that you will not continue the negotiation with that number on the table.

The effects of random anchors have much to tell us about the relationship between System 1 and System 2. Anchoring effects have always been studied in tasks of judgment and choice that are ultimately completed by System 2. However, System 2 works on data that is retrieved from memory, in an automatic and involuntary operation of System 1. System 2 is therefore susceptible to the biasing influence of anchors that make some information easier to retrieve. Furthermore, System 2 has no control over the effect and no knowledge of it.

The conclusion is that the ease with which instances come to mind is a System 1 heuristic, which is replaced by a focus on content when System 2 is more engaged. Multiple lines of evidence converge on the conclusion that people who let themselves be guided by System 1 are more strongly susceptible to availability biases than others who are in a state of higher vigilance.

Speaking of availability

“Because of the coincidence of two planes crashing last month, she now prefers to take the train. That’s silly. The risk hasn’t really changed; it is an availability bias.”

“He underestimates the risks of indoor pollution because there are few media stories on them. That’s an availability effect. He should look at the statistics.”

“She has been watching too many spy movies recently, so she’s seeing conspiracies everywhere.”

“The CEO has had several successes in a row, so failure doesn’t come easily to her mind. The availability bias is making her overconfident.”

The world in our heads is not a precise replica of reality; our expectations about the frequency of events are distorted by the prevalence and emotional intensity of the messages to which we are exposed.

“Risk” does not exist “out there”, independent of our minds and culture, waiting to be measured. Human beings have invented the concept of “risk” to help them understand and cope with the dangers and uncertainties of life. Although these dangers are real, there is no such thing as “real risk” or “objective risk”.

There is one thing you can do when you have doubts about the quality of the evidence: let your judgments of probability stay close to the base rate. Don’t expect this exercise of discipline to be easy – it requires a significant effort of self-monitoring and self-control.

The essential keys to disciplined Bayesian reasoning can be simply summarized:

* Anchor your judgment of the probability of an outcome on a plausible base rate

* Question the diagnosticity of your evidence

Both ideas are straightforward. It came as a shock to me when I realized that I was never taught how to implement them, and that even now I find it unnatural to do so.
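One compact way to see how the two keys fit together is the odds form of Bayes' rule. This is the standard identity, not something spelled out in these notes, and the symbols H (the hypothesis) and E (the evidence) are introduced here only for illustration. The first factor on the right is the base rate expressed as odds; the second is the likelihood ratio, which measures how diagnostic the evidence is.

```latex
\frac{P(H \mid E)}{P(\lnot H \mid E)}
  = \frac{P(H)}{P(\lnot H)} \times \frac{P(E \mid H)}{P(E \mid \lnot H)}
```

When the evidence is about as likely under either hypothesis, the likelihood ratio is close to 1 and the judgment should stay close to the base rate.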

The judgments of probability that our respondents offered, in both the Tom W and Linda problems, corresponded precisely to judgments of representativeness (similarity to stereotypes). The uncritical substitution of plausibility for probability has pernicious effects on judgments when scenarios are used as tools of forecasting. Consider two scenarios, which were presented to different groups, with a request to evaluate their probability:

A massive flood somewhere in North America next year, in which more than 1000 people drown

An earthquake in California sometime next year, causing a flood in which more than 1000 people drown

The California earthquake scenario is more plausible than the North America scenario, although its probability is certainly smaller. As expected, probability judgments were higher for the richer and more detailed scenario, contrary to logic. This is a trap for forecasters and their clients: adding detail to scenarios makes them more persuasive, but less likely to come true.

To appreciate the role of plausibility, consider the following questions:

Which alternative is more probable?

Mark has hair.

Mark has blond hair.

and

Which alternative is more probable?

Jane is a teacher.

Jane is a teacher and walks to work.

The two questions have the same logical structure as the Linda problem, but they cause no fallacy, because the more detailed outcome is only more detailed – it is not more plausible, or more coherent, or a better story. The evaluation of plausibility and coherence does not suggest an answer to the probability question. In the absence of a competing intuition, logic prevails.

The Linda problem and the dinnerware problem have exactly the same structure. Probability, like economic value, is a sum-like variable, as illustrated by this example:

probability (Linda is a teller) = probability (Linda is a feminist teller) + probability (Linda is a non-feminist teller)

System 1 averages instead of adding.
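Written as a formula, the sum-like property makes the logical constraint explicit. Because neither term on the right can be negative, the more detailed outcome can never be more probable than the broader one. The shorthand event names are used here only for brevity.

```latex
P(\text{teller})
  = P(\text{feminist teller}) + P(\text{non-feminist teller})
  \ge P(\text{feminist teller})
```

The same inequality covers the flood scenarios: a flood caused by a California earthquake is one of the ways a massive flood in North America could happen, so it cannot be the more probable of the two.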

One conclusion, which is not new, is that System 2 is not impressively alert.

Causes trump statistics

Consider the following scenario and note your intuitive answer to the question.

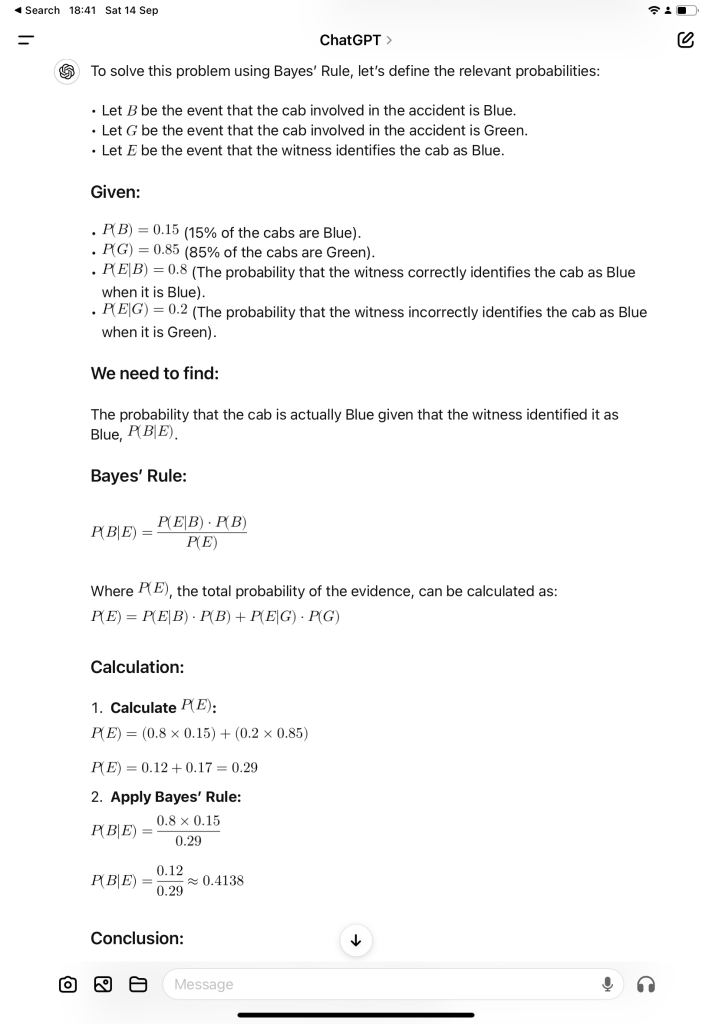

A cab was involved in a hit-and-run accident at night.

Two cab companies, the Green and the Blue, operate in the city.

You are given the following data:

* 85% of the cabs in the city are Green and 15% are Blue.

* A witness identified the cab as Blue. The court tested the reliability of the witness under the circumstances that existed on the night of the accident and concluded that the witness correctly identified each one of the two colors 80% of the time and failed 20% of the time.

What is the probability that the cab involved in the accident was Blue rather than Green?

Conclusion: the probability that the cab involved in the accident was Blue, given that the witness identified it as Blue, is approximately 0.414, or 41.4%.
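The 41.4% figure follows from Bayes' rule applied to the two numbers in the problem. A minimal sketch of the calculation; the function and variable names are invented for illustration.

```python
def posterior_blue(prior_blue: float, witness_accuracy: float) -> float:
    """P(cab is Blue | witness says Blue) by Bayes' rule."""
    prior_green = 1.0 - prior_blue
    # Witness says "Blue" and is right (the cab really is Blue).
    says_blue_and_is_blue = prior_blue * witness_accuracy
    # Witness says "Blue" and is wrong (the cab is actually Green).
    says_blue_but_is_green = prior_green * (1.0 - witness_accuracy)
    return says_blue_and_is_blue / (says_blue_and_is_blue + says_blue_but_is_green)


p = posterior_blue(prior_blue=0.15, witness_accuracy=0.80)
print(round(p, 3))  # 0.414
```

The witness is right 80% of the time, but Green cabs are so much more common that mistaken "Blue" reports about Green cabs (0.85 × 0.20 = 0.17) outnumber correct "Blue" reports about Blue cabs (0.15 × 0.80 = 0.12).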

System 1 can deal with stories in which the elements are causally linked, but it is weak in statistical reasoning.

The experiment shows that individuals feel relieved of responsibility when they know that others have heard the same request for help.

The test of learning psychology is whether your understanding of situations you encounter has changed, not whether you have learned a new fact.

Regression to the mean:

An important principle of skill training: rewards for improved performance work better than punishment of mistakes.

The instructor was right – but he was also completely wrong! His observation was astute and correct: occasions on which he praised a performance were likely to be followed by a disappointing performance, and punishments were typically followed by an improvement. But the inference he had drawn about the efficacy of reward and punishment was completely off the mark. What he had observed is known as regression to the mean, which in that case was due to random fluctuations in the quality of performance. Naturally, he praised only a cadet whose performance was far better than average. But the cadet was probably just lucky on that particular attempt and therefore likely to deteriorate regardless of whether or not he was praised. Similarly, the instructor would shout into a cadet’s earphones only when the cadet’s performance was unusually bad and therefore likely to improve regardless of what the instructor did. The instructor had attached a causal interpretation to the inevitable fluctuations of a random process.
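A small simulation can make the instructor's mistake concrete. This is a sketch under stated assumptions, none of which come from the notes: each attempt is modeled as a fixed skill plus independent luck, both drawn from a standard normal distribution, and nobody is praised or punished at all. The function names and the 10% cutoff are hypothetical.

```python
import random
from statistics import mean


def simulate_cadets(n_cadets: int = 10_000, seed: int = 0):
    """Return (first_attempt, second_attempt) scores: skill plus fresh luck each time."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_cadets):
        skill = rng.gauss(0.0, 1.0)           # stable across attempts
        first = skill + rng.gauss(0.0, 1.0)   # luck on attempt 1
        second = skill + rng.gauss(0.0, 1.0)  # independent luck on attempt 2
        results.append((first, second))
    return results


def regression_summary(results, fraction: float = 0.1):
    """Average scores of the best and worst first-attempt performers on both attempts."""
    ranked = sorted(results, key=lambda pair: pair[0])
    k = max(1, int(len(ranked) * fraction))
    worst, best = ranked[:k], ranked[-k:]
    return {
        "best_first": mean(f for f, _ in best),
        "best_second": mean(s for _, s in best),
        "worst_first": mean(f for f, _ in worst),
        "worst_second": mean(s for _, s in worst),
    }


summary = regression_summary(simulate_cadets())
for name, score in summary.items():
    print(f"{name}: {score:.2f}")
```

In this model the best first-attempt performers score lower on average the second time and the worst score higher, with no feedback involved. That is the pattern the instructor attributed to praise and punishment.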

Significant fact of the human condition: the feedback to which life exposes us is perverse. Because we tend to be nice to other people when they please us and nasty when they do not, we are statistically punished for being nice and rewarded for being nasty.

A few years ago, John Brockman, who edits the online magazine Edge, asked a number of scientists to report their “favorite equation”. These were my offerings:

success = talent + luck

great success = a little more talent + a lot more luck

Our mind is strongly biased toward causal explanations and does not deal well with “mere statistics”. When our attention is called to an event, associative memory will look for its cause – more precisely, activation will automatically spread to any cause that is already stored in memory. Causal explanations will be evoked when regression is detected, but they will be wrong because the truth is that regression to the mean has an explanation but does not have a cause. The event that attracts our attention in the golfing tournament is the frequent deterioration of performance of the golfers who were successful on day 1. The best explanation of it is that those golfers were unusually lucky that day, but this explanation lacks the causal force that our minds prefer. Indeed, we pay people quite well to provide interesting explanations of regression effects. A business commentator who correctly announces that “the business did better this year because it had done poorly last year” is likely to have a short tenure on the air.

This is perhaps the best evidence we have for the role of substitution. People are asked for a prediction but they substitute an evaluation of the evidence, without noticing that the question they answer is not the one they were asked. This process is guaranteed to generate predictions that are systematically biased; they completely ignore regression to the mean.

The objections to the principle of moderating intuitive predictions must be taken seriously, because absence of bias is not always what matters most. A preference for unbiased predictions is justified if all errors of prediction are treated alike, regardless of their direction. But there are situations in which one type of error is much worse than another. When a venture capitalist looks for “the next big thing”, the risk of missing the next Google or Facebook is far more important than the risk of making a modest investment in a start-up that ultimately fails.

We are back on the law of small numbers. In effect, you have a smaller sample of information from Kim than from Jane, and extreme outcomes are much more likely to be observed in small samples. There is more luck in the outcomes of small samples.
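The claim that extreme outcomes are more likely in small samples can be checked exactly with the binomial distribution. The sketch below uses fair coin flips as a stand-in for luck-driven outcomes; the sample sizes of 5 and 50 and the function name are illustrative assumptions, not figures from the notes.

```python
from math import comb


def prob_at_least(n_flips: int, min_heads: int) -> float:
    """Exact probability of getting at least `min_heads` heads in `n_flips` fair flips."""
    favorable = sum(comb(n_flips, k) for k in range(min_heads, n_flips + 1))
    return favorable / 2 ** n_flips


small = prob_at_least(5, 4)    # 80% heads or more in a sample of 5
large = prob_at_least(50, 40)  # 80% heads or more in a sample of 50

print(small)  # 0.1875
print(large < 0.001)  # True
```

An 80% share of heads turns up in nearly one in five samples of size 5, but almost never in samples of size 50, even though the coin is identical.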

Part 3: Overconfidence

The human mind does not deal well with nonevents. The fact that many of the important events that did occur involve choices further tempts you to exaggerate the role of skill and underestimate the part that luck played in the outcome.

A general limitation of the human mind is its imperfect ability to reconstruct past states of knowledge, or beliefs that have changed. Once you adopt a new view of the world (or of any part of it), you immediately lose much of your ability to recall what you used to believe before your mind changed.

(book reference “The Halo Effect”, by Philip Rosenzweig) concludes that stories of success and failure consistently exaggerate the impact of leadership style and management practices on firm outcomes, and thus their message is rarely useful.

The basic message of “Built to Last” and other similar books is that good managerial practices can be identified and that good practices will be rewarded by good results. Both messages are overstated. The comparison of firms that have been more or less successful is to a significant extent a comparison between firms that have been more or less lucky. Knowing the importance of luck, you should be particularly suspicious when highly consistent patterns emerge from the comparison of successful and less successful firms. In the presence of randomness, regular patterns can only be mirages. Because luck plays a large role, the quality of leadership and management practices cannot be inferred reliably from observations of success. And even if you had perfect foreknowledge that a CEO has brilliant vision and extraordinary competence, you still would be unable to predict how the company will perform with much better accuracy than the flip of a coin.

Confidence is a feeling, which reflects the coherence of the information and the cognitive ease of processing it. It is wise to take admissions of uncertainty seriously, but declarations of high confidence mainly tell you that an individual has constructed a coherent story in his mind, not necessarily that the story is true.

The illusion of skill is not only an individual aberration; it is deeply ingrained in the culture of the industry. Facts that challenge such basic assumptions – and thereby threaten people’s livelihood and self-esteem – are simply not absorbed.

Several studies have shown that human decision makers are inferior to a prediction formula even when they are given the score suggested by the formula! They feel that they can overrule the formula because they have additional information about the case, but they are wrong more often than not.

Another reason for the inferiority of expert judgment is that humans are incorrigibly inconsistent in making summary judgments of complex information. When asked to evaluate the same information twice, they frequently give different answers.

The widespread inconsistency is probably due to the extreme context dependency of System 1. We know from studies of priming that unnoticed stimuli in our environment have a substantial influence on our thoughts and actions. These influences fluctuate from moment to moment. The brief pleasure of a cool breeze on a hot day may make you slightly more positive and optimistic about whatever you are evaluating at the time. The prospects of a convict being granted parole may change significantly during the time that elapses between successive food breaks in the parole judges’ schedule. Because you have little direct knowledge of what goes on in your mind, you will never know that you might have made a different judgment or reached a different decision under very slightly different circumstances. Formulas do not suffer from such problems. Given the same input they always return the same answer.

The important conclusion from this research is that an algorithm that is constructed on the back of an envelope is often good enough to compete with an optimally weighted formula, and certainly good enough to outdo expert judgment.

Leaders of firefighting teams: Klein followed them as they fought fires and later interviewed the leader about his thoughts as he made decisions. As Klein described it in our joint article, he and his collaborators investigated how the commanders could make good decisions without comparing options. The initial hypothesis was that commanders would restrict their analysis to only a pair of options, but that hypothesis proved to be incorrect. In fact, the commanders usually generated only a single option, and that was all they needed. They could draw on the repertoire of patterns that they had compiled during more than a decade of both real and virtual experience to identify a plausible option, which they considered first. They evaluated this option by mentally simulating it to see if it would work in the situation they were facing … If the course of action they were considering seemed appropriate, they would implement it. If it had shortcomings, they would modify it. If they could not easily modify it, they would turn to the next most plausible option and run through the same procedure until an acceptable course of action was found. Klein elaborated this description into a theory of decision making that he called the recognition-primed decision (RPD) model, which applies to firefighters but also describes expertise in other domains, including chess.
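The procedure described above reads like a simple loop, and can be sketched as one. This is only an illustration of the control flow, not Klein's formal model: the function name, the three parameters, and the toy plans are all hypothetical, and the mental simulation is reduced to a yes/no check.

```python
from typing import Callable, Iterable, Optional, TypeVar

Option = TypeVar("Option")


def recognition_primed_decision(
    options_by_plausibility: Iterable[Option],
    works_in_simulation: Callable[[Option], bool],
    try_to_modify: Callable[[Option], Optional[Option]],
) -> Optional[Option]:
    """Consider one option at a time, most plausible first, without comparing options."""
    for option in options_by_plausibility:
        # Mentally simulate the option in the current situation.
        if works_in_simulation(option):
            return option
        # It has shortcomings: try to modify it, then simulate the modified version.
        modified = try_to_modify(option)
        if modified is not None and works_in_simulation(modified):
            return modified
        # Not easily fixable: move on to the next most plausible option.
    return None


choice = recognition_primed_decision(
    options_by_plausibility=["plan A", "plan B"],
    works_in_simulation=lambda plan: plan == "plan B",
    try_to_modify=lambda plan: None,  # toy case: no easy modification exists
)
print(choice)  # plan B
```

The loop returns the first acceptable option rather than the best of several, which matches the observation that commanders usually needed only a single option.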

Definition of intuition: the situation has provided a cue; this cue has given the expert access to information stored in memory, and the information provides the answer. Intuition is nothing more and nothing less than recognition.

People’s confidence in a belief reflects two related impressions: cognitive ease and coherence. We are confident when the story we tell ourselves comes easily to mind, with no contradiction and no competing scenario. But ease and coherence do not guarantee that a belief held with confidence is true.

A mind that follows WYSIATI will achieve high confidence much too easily by ignoring what it does not know. It is therefore not surprising that many of us are prone to have high confidence in unfounded intuitions.

Two basic conditions for acquiring a skill:

- an environment that is sufficiently regular to be predictable

- an opportunity to learn these regularities through prolonged practice

Remember this rule: intuition cannot be trusted in the absence of stable regularities in the environment.

Whether professionals have a chance to develop intuitive expertise depends essentially on the quality and speed of feedback, as well as on sufficient opportunity to practice.

When evaluating expert intuition you should always consider whether there was an adequate opportunity to learn the cues, even in a regular environment.

In a less regular, or low-validity, environment, the heuristics of judgment are invoked. System 1 is often able to produce quick answers to difficult questions by substitution, creating coherence where there is none.

The evidence suggests that optimism is widespread, stubborn, and costly.

One of the lessons of the financial crisis that led to the Great Recession is that there are periods in which competition, among experts and among organizations, creates powerful forces that favor a collective blindness to risk and uncertainty.

When one has just had a door slammed in one’s face by an angry homemaker, the thought that “she was an awful woman” is clearly superior to “I am an inept salesperson.”

Part 4: Choices

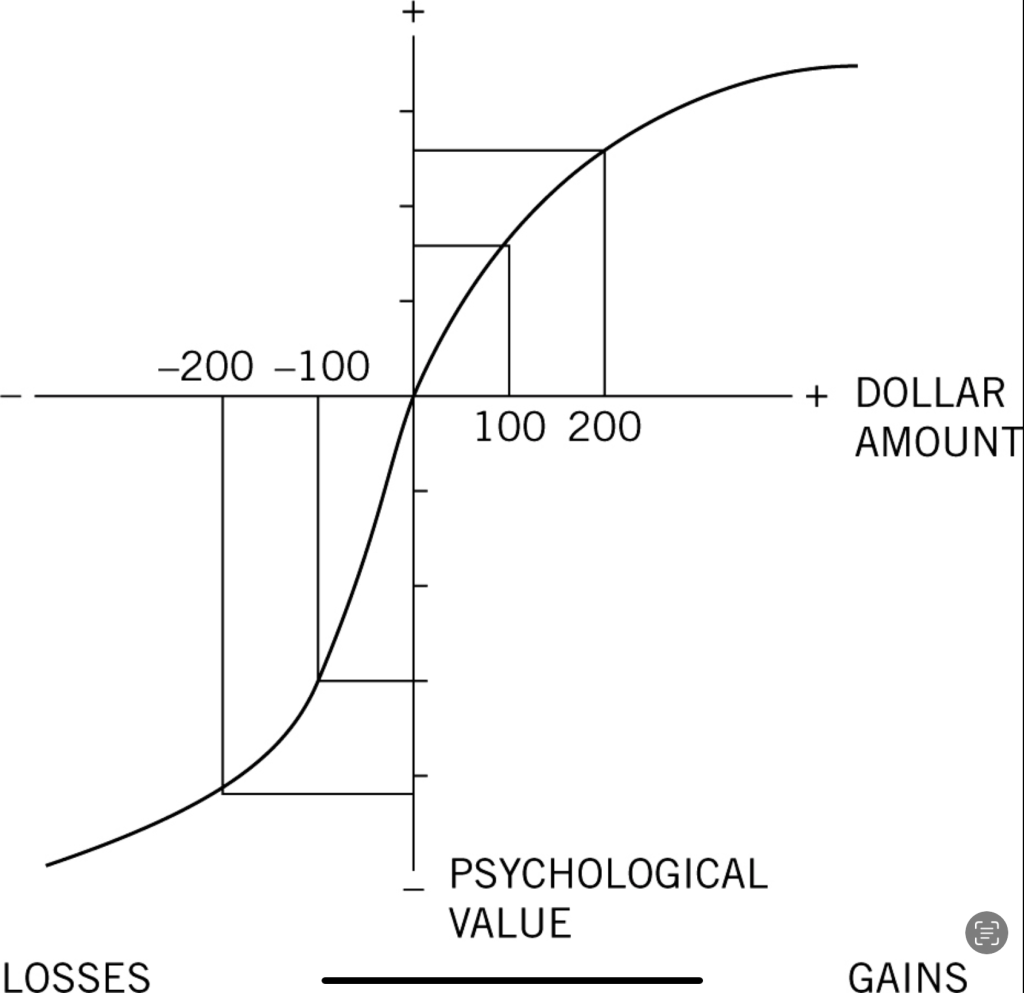

Prospect Theory:

Problem 1: Which do you choose?

Get $900 for sure OR 90% chance to get $1,000

Problem 2: Which do you choose?

Lose $900 for sure OR 90% chance to lose $1,000

You were probably risk averse in problem 1, as is the great majority of people. The subjective value of a gain of $900 is certainly more than 90% of the value of a gain of $1,000. The risk-averse choice in this problem would not have surprised Bernoulli.

Now examine your preference in problem 2. If you are like most other people, you chose the gamble in this question. The explanation for this risk-seeking choice is the mirror image of the explanation of risk aversion in problem 1: the (negative) value of losing $900 is much more than 90% of the (negative) value of losing $1,000.

People become risk seeking when all their options are bad.

The reason you like the idea of gaining $100 and dislike the idea of losing $100 is not that these amounts change your wealth. You just like winning and dislike losing – and you almost certainly dislike losing more than you like winning.

The graph has two distinct parts, to the right and to the left of a neutral reference point. A salient feature is that it is S-shaped, which represents diminishing sensitivity for both gains and losses. Finally, the two curves of the S are not symmetrical. The slope of the function changes abruptly at the reference point: the response to losses is stronger than the response to corresponding gains. This is loss aversion.
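A small Python sketch can make the two features of the graph concrete. The functional form and the parameter values (`alpha=0.88`, `loss_aversion=2.25`) are illustrative assumptions, not numbers taken from these notes; the function name `value` is also hypothetical.

```python
def value(change, alpha=0.88, loss_aversion=2.25):
    """Assumed S-shaped value of a change relative to the reference point (0).

    alpha < 1 gives diminishing sensitivity on both sides;
    loss_aversion > 1 makes the loss branch steeper than the gain branch.
    """
    if change >= 0:
        return change ** alpha
    return -loss_aversion * ((-change) ** alpha)


# Diminishing sensitivity: going from $100 to $200 feels bigger
# than going from $900 to $1,000, although both steps are $100.
step_near_reference = value(200) - value(100)
step_far_from_reference = value(1000) - value(900)

# Loss aversion: a fair coin flip to win or lose $100 has negative value,
# because the loss looms larger than the equal-sized gain.
coin_flip = 0.5 * value(100) + 0.5 * value(-100)

print(step_near_reference > step_far_from_reference)
print(coin_flip < 0)
```

With any `loss_aversion` above 1, the coin flip is unattractive even though its expected dollar amount is zero.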

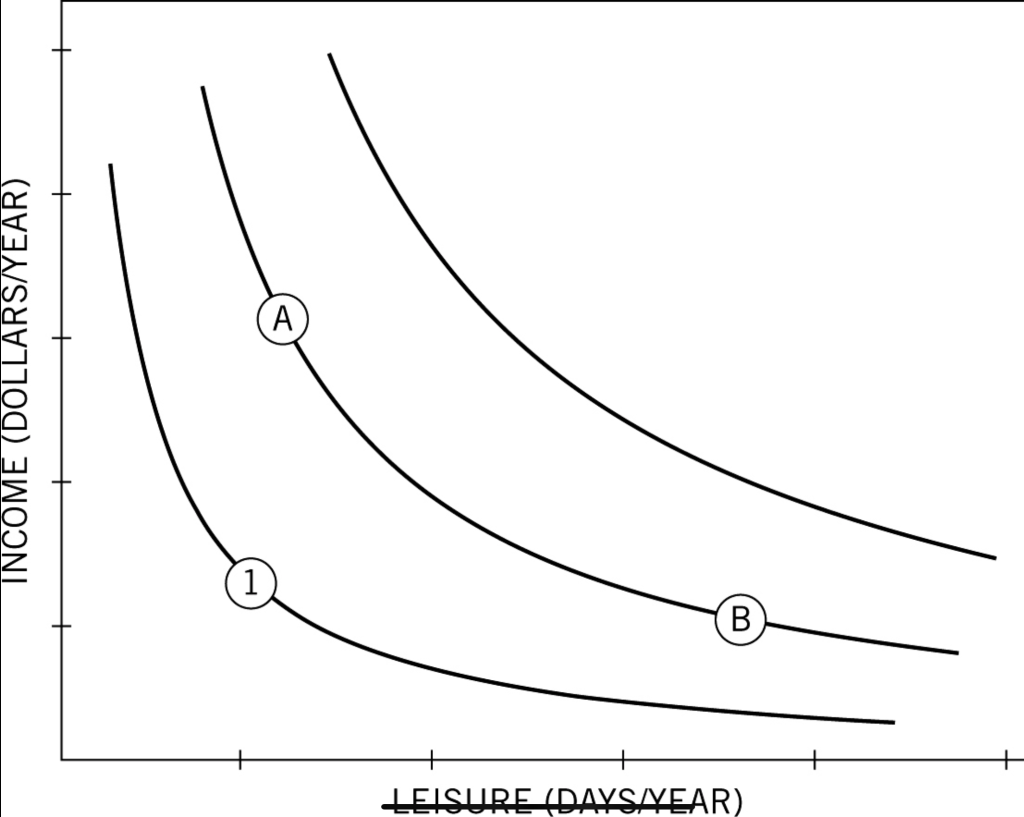

The image below displays an individual’s “indifference map” for two goods:

The convex shape indicates diminishing marginal utility: the more leisure you have, the less you care for an extra day of it, and each added day is worth less than the one before. Similarly, the more income you have, the less you care for an extra dollar, and the amount you are willing to give up for an extra day of leisure increases.

What is missing from the figure is an indication of the individual’s current income and leisure. If you are a salaried employee, the terms of your employment specify a salary and a number of vacation days, which is a point on the map. This is your reference point, your status quo, but the figure does not show it. By failing to display it, the theorists who draw this figure invite you to believe that the reference point does not matter, but by now you know that of course it does.

Prospect theory included the argument that “highly unlikely events are either ignored or overweighted”.

Oversimplified answers:

- People overestimate the probabilities of unlikely events

- People overweight unlikely events in their decisions
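The second point, overweighting in decisions, can be illustrated with a probability weighting function. The sketch below uses one commonly cited inverse-S form with a curvature parameter of 0.61; both the form and the parameter are assumptions brought in for illustration and are not stated in these notes. It says nothing about the first point, which concerns how probabilities are estimated rather than how they are weighted.

```python
def decision_weight(probability, gamma=0.61):
    """Assumed inverse-S weighting: small probabilities get more weight
    than they deserve, near-certain ones get less."""
    p = probability
    numerator = p ** gamma
    denominator = (p ** gamma + (1 - p) ** gamma) ** (1 / gamma)
    return numerator / denominator


for p in (0.01, 0.10, 0.50, 0.90, 0.99):
    print(f"probability {p:.2f} -> decision weight {decision_weight(p):.3f}")
```

Under these assumptions a 1% chance is weighted as if it were several times more likely, while a 99% chance is weighted as clearly less than certain.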

The probability of a rare event will (often, not always) be overestimated, because of the confirmatory bias of memory.

The example also shows that it is costly to be risk averse for gains and risk seeking for losses.

Recall that professional golfers putt more successfully when working to avoid a bogey than to achieve a birdie. One conclusion we can draw is that the best golfers create a separate account for each hole; they do not only maintain a single account for their overall success.

In the presence of sunk costs, the manager’s incentives are misaligned with the objectives of the firm and its shareholders, a familiar type of what is known as the agency problem. Boards of directors are well aware of these conflicts and often replace a CEO who is encumbered by prior decisions and reluctant to cut losses. The members of the board do not necessarily believe that the new CEO is more competent than the one she replaces. They do know that she does not carry the same mental accounts and is therefore better able to ignore the sunk costs of past investments in evaluating current opportunities.

Associative memory contains a representation of the normal world and its rules. An abnormal event attracts attention, and it also activates the idea of the event that would have been normal under the same circumstances.

Decision-makers tend to prefer the sure thing over the gamble (they are risk averse) when the outcomes are good. They tend to reject the sure thing and accept the gamble (they are risk-seeking) when both outcomes are negative.

How do you decide? The answer is usually embarrassed silence. The intuitions that determined the original choice came from System 1 and had no more moral basis than did the preference for keeping $20 or the aversion to losing $30. Saving lives with certainty is good, deaths are bad. Most people find that their System 2 has no moral intuitions of its own to answer the question.

Part 5: Two Selves

Memories are all we get to keep from our experience of living, and the only perspective that we can adopt as we think about our lives is therefore that of the remembering self.

We have strong preferences about the duration of our experiences of pain and pleasure. We want pain to be brief and pleasure to last. But our memory, a function of System 1, has evolved to represent the most intense moment of an episode of pain or pleasure (the peak) and the feeling when the episode was at its end. A memory that neglects duration will not serve our preference for long pleasure and short pains.
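The gap between what we want and what memory records can be sketched with two made-up pain episodes. The scoring rule here (a simple average of the peak and the final moment) and the minute-by-minute ratings are simplifying assumptions for illustration, not data from these notes.

```python
def remembered_pain(pain_by_minute):
    """Assumed peak-end score: average of the worst moment and the last one.
    Duration plays no role in this score."""
    return (max(pain_by_minute) + pain_by_minute[-1]) / 2


def experienced_pain(pain_by_minute):
    """Total pain actually lived through, which grows with duration."""
    return sum(pain_by_minute)


# Hypothetical ratings on a 0-10 scale, one per minute.
short_episode = [2, 5, 8, 8]            # ends at its worst moment
long_episode = [2, 5, 8, 8, 6, 4, 2]    # same start, plus a milder tail

print("remembered:", remembered_pain(short_episode), remembered_pain(long_episode))
print("experienced:", experienced_pain(short_episode), experienced_pain(long_episode))
```

In this sketch the longer episode contains strictly more pain, yet it is remembered as the milder one because it ends gently. A memory that scores episodes this way would lead us to choose the longer pain.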

“This is a bad case of duration neglect. You are giving the good and the bad part of your experience equal weight, although the good part lasted ten times as long as the other.”

Many point out that they would not send either themselves or another amnesic to climb mountains or trek through the jungle – because these experiences are mostly painful in real time and gain value from the expectation that both the pain and the joy of reaching the goal will be memorable.

Odd as it may seem, I am my remembering self, and the experiencing self, who does my living, is like a stranger to me.

Our emotional state is largely determined by what we attend to, and we are normally focused on our current activity and immediate environment. There are exceptions, where the quality of subjective experience is dominated by recurrent thoughts rather than by the events of the moment. When happily in love, we may feel joy even when caught in traffic, and if grieving, we may remain depressed when watching a funny movie. In normal circumstances, however, we draw pleasure and pain from what is happening at the moment, if we attend to it.

Any aspect of life to which attention is directed will loom large in a global evaluation. This is the essence of the focusing illusion, which can be described in a single sentence: Nothing in life is as important as you think it is when you are thinking about it.

It is logical to describe the life of the experiencing self as a series of moments, each with a value. But this is not how the mind represents episodes. The remembering self, as I have described it, also tells stories and makes choices, and neither the stories nor the choices properly represent time. In storytelling mode, an episode is represented by a few critical moments, especially the beginning, the peak, and the end. Duration is neglected.

Winning a lottery yields a new state of wealth that will endure for some time, but decision utility corresponds to the anticipated intensity of the reaction to the news that one has won. The withdrawal of attention and other adaptations to the new state are neglected, as only that thin slice of time is considered. The same focus on the transition to the new state and the same neglect of time and adaptation are found in forecasts of the reaction to chronic diseases, and of course in the focusing illusion. The mistake that people make in the focusing illusion involves attention to selected moments and neglect of what happens at other times. The mind is good with stories, but it does not appear to be well designed for the processing of time.

Conclusions

The two characters were the intuitive System 1, which does the fast thinking, and the effortful and slower System 2, which does the slow thinking, monitors System 1, and maintains control as best it can within its limited resources. The two species were the fictitious Econs, who live in the land of theory, and the Humans, who act in the real world. The two selves are the experiencing self, which does the living, and the remembering self, which keeps score and makes the choices.